Artificial intelligence is rapidly becoming embedded across enterprise software. AI systems now power developer copilots, customer support automation, fraud detection engines, financial analytics, and internal knowledge assistants.

While these systems create enormous productivity gains, they also introduce new categories of risk. Traditional application security practices were designed for deterministic software systems. AI systems behave differently. They generate dynamic outputs, interact with external data sources, and increasingly operate as autonomous agents capable of triggering actions.

This shift fundamentally changes the security model.

Enterprises must now treat AI as a high-impact operational system that requires its own security architecture, monitoring, and governance.

Implementing clear AI security best practices is becoming essential for organizations deploying AI at scale.

What Are AI Security Best Practices?

AI security best practices are the technical and operational controls used to protect artificial intelligence systems from attacks such as prompt injection, training data poisoning, model abuse, and sensitive data exposure.

These practices include securing training data pipelines, enforcing strong authentication and authorization for AI APIs, monitoring runtime behavior, validating AI outputs, and applying guardrails around inputs and outputs.

Organizations that adopt these practices can safely deploy AI systems while maintaining control over AI security risk and regulatory exposure.

Why AI Security Best Practices Matter

AI systems expand the attack surface of modern applications.

Attackers can manipulate prompts, exploit model behavior, cause models to reveal sensitive data, or trigger unintended actions through AI agents. Unlike traditional vulnerabilities, these attacks often exploit behavioral weaknesses rather than coding errors.

As enterprises integrate AI into business-critical workflows, security leaders must ensure that AI systems operate safely under real-world conditions.

The goal is not simply detecting vulnerabilities in code. It is ensuring that AI systems behave securely during real interactions.

Top 10 AI Security Best Practices

1. Treat AI Systems as Production Infrastructure

AI systems should never be treated as experimental components once deployed.

Organizations must manage models with the same rigor as production software. This includes version control, deployment validation, rollback capabilities, and change management.

Model updates can alter system behavior in unexpected ways. Operational governance is therefore essential.

2. Secure Training Data and Data Pipelines

Training data defines how an AI model behaves.

If attackers manipulate training data, they can influence the model's responses. This risk is known as training data poisoning.

Enterprises should protect training pipelines by implementing:

- Data provenance tracking

- Dataset validation mechanisms

- Secure ingestion pipelines

- Monitoring for anomalous data changes

Maintaining the integrity of training data is foundational to AI security.

3. Enforce Strong Authentication and Authorization

AI models are often exposed through APIs. These interfaces provide powerful capabilities that must be carefully controlled.

Organizations should enforce strict identity controls including:

- Strong authentication for AI APIs

- Role-based access policies

- Isolation between internal and external AI services

- Comprehensive logging of AI usage

Access to AI services should follow the principle of least privilege.

4. Protect Against Prompt Injection

Prompt injection attacks attempt to manipulate AI models through crafted input.

Attackers may attempt to override system instructions or trick models into revealing sensitive information.

Effective defenses include:

- Separating system prompts from user input

- Sanitizing and validating user prompts

- Restricting model access to sensitive systems

- Using structured prompts rather than raw text inputs

Prompt injection protection should be considered a core element of AI security.

5. Restrict AI Agent and Tool Access

Many AI platforms integrate with tools, APIs, and enterprise services.

If an attacker manipulates an AI agent’s instructions, the agent may perform unintended actions such as executing commands or retrieving sensitive information.

Organizations must implement strict permission models that define what tools an AI agent can access and under what conditions.

AI agents should operate under the principle of least privilege.

6. Monitor AI Systems in Runtime

Static validation alone cannot guarantee AI safety.

AI systems must be monitored continuously during runtime to detect misuse and unexpected behavior.

Monitoring should include:

- Prompt and response logging

- Detection of anomalous interactions

- Monitoring for model abuse

- Detection of sensitive data exposure

Runtime visibility allows security teams to identify risks before they escalate.

7. Prevent Sensitive Data Leakage

AI models may inadvertently reveal confidential information from training data or connected systems.

Organizations must implement safeguards that prevent the exposure of sensitive information.

Recommended controls include:

- Output filtering and redaction

- Data classification enforcement

- Limiting access to sensitive knowledge sources

- Continuous monitoring of generated responses

Protecting enterprise data must remain a central priority.

8. Validate AI Outputs Before Acting on Them

AI outputs are probabilistic and can be incorrect or misleading.

Critical decisions should never rely solely on AI responses.

Applications should validate AI outputs through rule checks, human review, or additional verification mechanisms before triggering automated actions.

9. Implement AI-Specific Security Testing

Traditional security testing tools were not designed to evaluate AI behavior.

Security teams must adopt testing methods that evaluate how AI systems behave during real interactions.

Testing should evaluate:

- Prompt injection scenarios

- Sensitive data exposure risks

- Agent misuse scenarios

- Authorization failures

- Business logic manipulation

Testing AI behavior is critical to identifying real security risks.

10. Establish Enterprise AI Governance

AI security is not only a technical challenge. It is also a governance challenge.

Organizations must establish policies governing how AI systems are developed and deployed.

Governance frameworks should include:

- Responsible AI policies

- Model risk management processes

- Data protection requirements

- Transparency and audit mechanisms

These policies ensure that AI systems operate within defined security and ethical boundaries.

AI Security Risks vs Security Controls

This table illustrates why traditional security testing alone cannot address AI risks. Runtime controls and guardrails are required.

Securing AI Systems with the Aptori AI Gateway

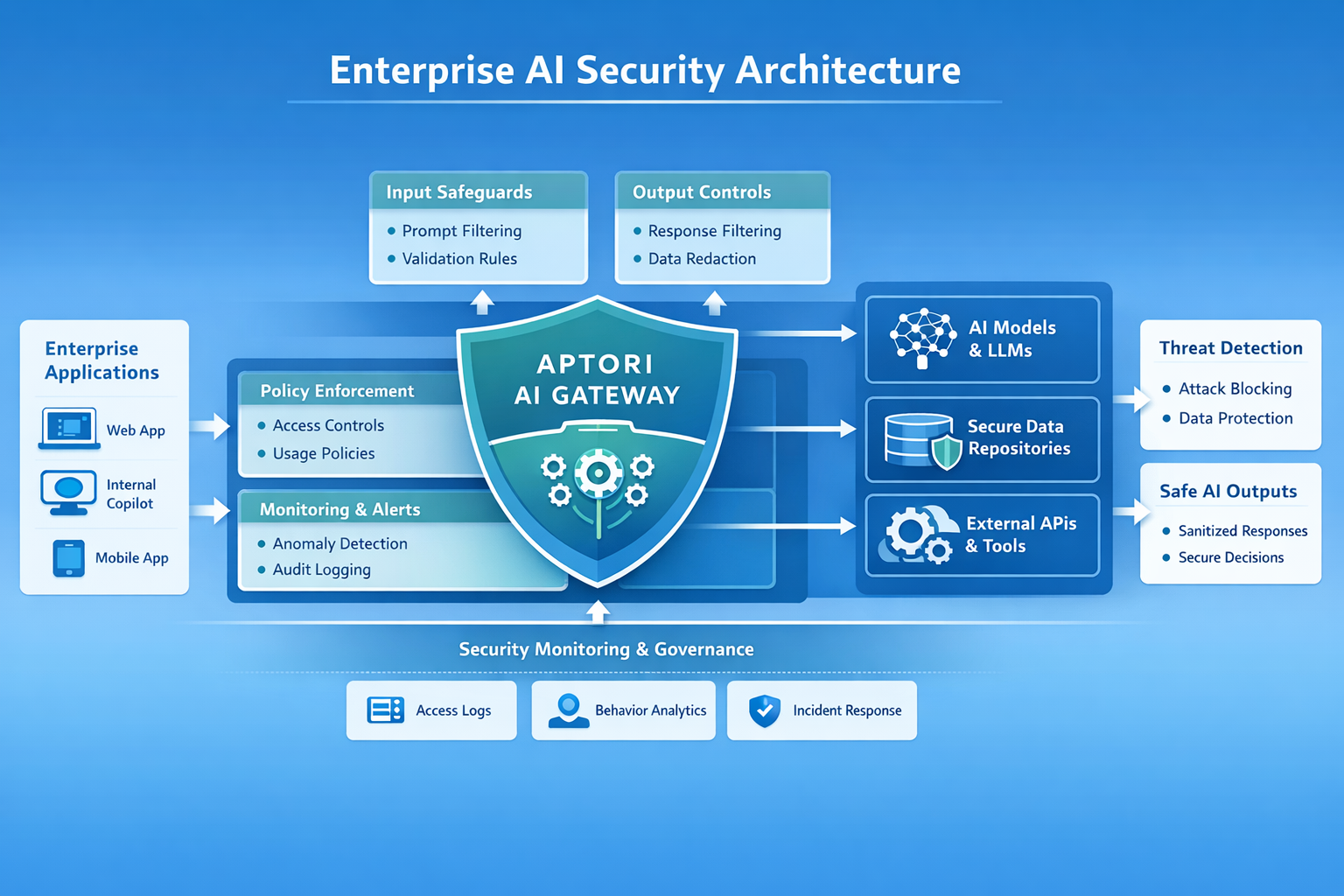

As enterprises deploy AI capabilities across applications, controlling how AI models interact with users and data becomes critical.

The Aptori AI Gateway provides a runtime security layer designed to enforce guardrails around AI systems and large language models.

Rather than relying solely on model-level protections, the AI Gateway introduces centralized control and monitoring for AI interactions.

The gateway sits between enterprise applications and AI models and applies security policies in real time.

Input Guardrails

User prompts can be inspected before reaching the model. The gateway can detect and block malicious inputs such as prompt injection attempts or attempts to bypass system instructions.

This prevents users from manipulating model behavior.

Output Guardrails

Responses generated by the model can be analyzed before being returned to the user.

The gateway can block responses that expose sensitive data, violate policy, or produce unsafe outputs.

Policy Enforcement

Organizations can define policies governing how AI systems are used, including:

- Allowed prompts

- Allowed tools and integrations

- Data access restrictions

- Model usage rules

This ensures that AI systems operate within defined enterprise security policies.

Runtime Monitoring

The gateway provides full visibility into AI interactions. Security teams can monitor prompts, responses, and usage patterns to detect abuse or operational risks.

This visibility enables enterprises to maintain control over AI deployments even as usage scales across teams.

Building Secure AI Systems

AI adoption is accelerating across every industry. With this growth comes a new generation of security challenges that cannot be solved using traditional application security tools alone.

Organizations must implement AI security best practices, monitor AI systems during runtime, and enforce guardrails that ensure models behave safely in real environments.

Solutions such as the Aptori AI Gateway enable enterprises to apply these protections consistently across their AI infrastructure.

Because in AI-driven systems, security is not only about protecting code.

It is about ensuring that intelligent systems interact with the world safely and responsibly.

Frequently Asked Questions About AI Security

What are AI security best practices?

AI security best practices are the policies, technologies, and operational processes used to protect AI systems from attacks such as prompt injection, data poisoning, and sensitive data leakage.

Why is AI security important for enterprises?

AI systems interact with sensitive data and influence critical decisions. Without proper security controls, attackers may manipulate models, expose confidential data, or trigger unintended actions.

What is prompt injection?

Prompt injection is an attack technique where malicious input is used to manipulate an AI model into ignoring its instructions or revealing sensitive information.

How can organizations secure AI applications?

Organizations can secure AI applications by implementing strong access controls, protecting training data, monitoring runtime behavior, validating AI outputs, and deploying guardrail technologies such as AI gateways.

Take control of your Application and API security

See how Aptori’s award-winning, AI-driven platform uncovers hidden business logic risks across your code, applications, and APIs. Aptori prioritizes the risks that matter and automates remediation, helping teams move from reactive security to continuous assurance.

Request your personalized demo today.