Application security teams often experience a confusing contradiction. Security scanners generate thousands of alerts, yet real vulnerabilities still make their way into production systems and frequently appear in breach reports.

This phenomenon can be described as the AppSec Detection Gap.

The detection gap refers to the difference between:

- What security tools detect

- What attackers actually exploit

Understanding this gap is essential for improving application security programs.

What Security Tools Detect

Most application security scanners rely on pattern-based analysis. They search source code or runtime responses for known vulnerability signatures.

Examples include:

- unsanitized input patterns

- unsafe function calls

- missing validation checks

- insecure library usage

This approach works well for detecting well-known vulnerability classes such as SQL injection or unsafe deserialization. However, these techniques focus primarily on code patterns rather than system behavior.

As a result, scanners frequently generate alerts about potential issues that may not be exploitable in the context of the application.

This is one of the primary sources of false positives.
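To make the pattern-based approach concrete, here is a minimal sketch of a signature rule in Python. The rule, the `scan` helper, and the sample lines are hypothetical illustrations, not the implementation of any particular scanner; real tools use far richer rule languages.

```python
import re

# Hypothetical rule: flag execute() calls whose first argument is an
# f-string or a string literal concatenated with something else.
SQLI_RULE = re.compile(r"""execute\(\s*(f["']|["'][^"']*["']\s*\+)""")


def scan(source: str) -> list[int]:
    """Return the 1-based line numbers that match the rule."""
    return [
        lineno
        for lineno, line in enumerate(source.splitlines(), start=1)
        if SQLI_RULE.search(line)
    ]


SAMPLE = '''\
cursor.execute("SELECT * FROM users WHERE id = " + user_id)
cursor.execute("SELECT * FROM logs WHERE level = " + LOG_LEVEL)
cursor.execute("SELECT * FROM users WHERE id = %s", (user_id,))
'''

findings = scan(SAMPLE)
print(findings)
```

The rule flags lines 1 and 2 because both concatenate a value into a query. If `LOG_LEVEL` is a constant defined by the application (an assumption of this example), line 2 is not exploitable, yet the rule cannot tell the difference because it only sees the shape of the code.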

What Attackers Exploit

Attackers rarely exploit isolated lines of code. Instead, they exploit system behavior.

Real vulnerabilities often emerge from interactions across:

- authentication systems

- authorization logic

- API workflows

- business processes

- data flows across multiple services

Large-scale empirical research shows that vulnerabilities frequently evolve over time rather than appearing as a single coding mistake.

A large empirical study, The Secret Life of Software Vulnerabilities (Iannone et al.), examined more than 3,600 vulnerabilities across over 1,000 open source projects to understand how vulnerabilities are introduced, how they evolve, and how long they remain in production systems.

The results support this view: vulnerabilities tend to emerge gradually as software evolves, rather than being introduced in a single moment of developer error.

The study found that vulnerabilities required an average of 4.71 contributing commits before they fully materialized, and more than 60 percent of vulnerabilities involved multiple commits before becoming exploitable.

These vulnerabilities emerge from how software systems behave in practice. This type of risk is difficult for traditional pattern-based security tools to detect.

Where the Detection Gap Appears

Once the difference between pattern detection and behavioral vulnerabilities becomes clear, the detection gap begins to take shape.

Security scanners typically analyze a snapshot of the codebase and search for known vulnerability patterns. They excel at identifying well-defined issues that can be expressed through static rules.

Attackers, however, rarely rely on such simple weaknesses.

Instead, they exploit weaknesses in how systems behave when components interact.

A vulnerability may appear only when a particular API endpoint is accessed after a specific authentication flow, or when authorization logic fails to correctly enforce object ownership across multiple services. Business logic flaws, authorization bypasses, and API abuse frequently arise from workflows that span multiple components of an application.

These types of vulnerabilities rarely appear as obvious code patterns.

They appear as unexpected system behavior.

The result is a structural mismatch between how scanners analyze applications and how attackers exploit them.

Why the Detection Gap Exists

The AppSec detection gap is not simply the result of imperfect tools or poorly written rules. It reflects a deeper mismatch between how automated scanners analyze software and how vulnerabilities actually emerge in modern systems.

Several structural factors contribute to this gap.

Pattern-Based Detection

Most security scanners operate by identifying known vulnerability patterns in source code. If a query looks unsafe or an input appears unvalidated, the tool raises a warning.

This approach works well for well-known vulnerability classes, but it has an important limitation. Many modern vulnerabilities do not appear as obvious code patterns. Authorization flaws, workflow bypasses, and business logic vulnerabilities often look completely normal when individual pieces of code are examined in isolation.

The scanner detects patterns. Attackers exploit behavior.
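A small sketch can show why such flaws look normal in isolation. The `Order` type and the `pay`, `ship`, and `ship_checked` functions below are hypothetical and stand in for a two-step checkout workflow; no line contains an unsafe call or an unvalidated input that a signature rule could match.

```python
from dataclasses import dataclass


@dataclass
class Order:
    total: float
    state: str = "cart"  # intended workflow: cart -> paid -> shipped


def pay(order: Order) -> None:
    """Step 1: mark the order as paid (payment processing omitted)."""
    order.state = "paid"


def ship(order: Order) -> None:
    """Step 2: ship the order. Nothing here looks unsafe to a pattern
    rule, but the function never verifies that step 1 happened."""
    order.state = "shipped"


def ship_checked(order: Order) -> None:
    """The same step with the workflow precondition enforced."""
    if order.state != "paid":
        raise ValueError("order must be paid before shipping")
    order.state = "shipped"


unpaid = Order(total=250.0)
ship(unpaid)  # succeeds even though pay() was never called
print(unpaid.state)
```

The weakness is the missing precondition, which only becomes visible when the steps are exercised out of order. That is a property of the workflow, not of any single line.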

Limited System Context

Security tools typically analyze code without full knowledge of the system architecture or runtime environment. They cannot easily understand authentication flows, API workflows, or business rules that determine whether an operation is actually safe.

As a result, scanners often flag code that appears risky but is harmless in context, while missing vulnerabilities that only emerge when multiple components interact.

Scalability Tradeoffs

Accurately determining exploitability requires deep program analysis, but such analysis is computationally expensive. To scale across large codebases and CI pipelines, static analysis tools must simplify the problem and rely on approximations.

These approximations inevitably produce uncertainty. When the tool cannot determine whether a vulnerability truly exists, it often reports a warning. This conservative strategy helps avoid missed issues, but it also increases false positives.
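One common simplification of this kind is flow-insensitive taint tracking, sketched below with Python's standard `ast` module. The source and sink lists and the helper names are assumptions made for this example; production analyzers are considerably more sophisticated. The sketch treats a variable as tainted if it is ever assigned from a source, regardless of later reassignment.

```python
import ast

SOURCES = {"input"}   # calls whose result is treated as attacker-controlled
SINKS = {"system"}    # attribute calls treated as dangerous, e.g. os.system


def tainted_names(tree: ast.AST) -> set[str]:
    """Flow-insensitive approximation: a name is tainted if it is EVER
    assigned from a source call, even if it is overwritten afterwards."""
    names = set()
    for node in ast.walk(tree):
        if (isinstance(node, ast.Assign)
                and isinstance(node.value, ast.Call)
                and isinstance(node.value.func, ast.Name)
                and node.value.func.id in SOURCES):
            for target in node.targets:
                if isinstance(target, ast.Name):
                    names.add(target.id)
    return names


def find_warnings(source: str) -> list[int]:
    """Return line numbers of sink calls that receive a tainted name."""
    tree = ast.parse(source)  # parses only; the sample is never executed
    tainted = tainted_names(tree)
    lines = []
    for node in ast.walk(tree):
        if (isinstance(node, ast.Call)
                and isinstance(node.func, ast.Attribute)
                and node.func.attr in SINKS):
            for arg in node.args:
                if isinstance(arg, ast.Name) and arg.id in tainted:
                    lines.append(node.lineno)
    return sorted(lines)


SAMPLE = '''\
import os
cmd = input()
os.system(cmd)
cmd = "ls"
os.system(cmd)
'''

flagged = find_warnings(SAMPLE)
print(flagged)
```

The call on line 3 is a genuine command injection. The call on line 5 runs a constant string, but the approximation still reports it because ordering information was discarded to keep the analysis cheap. Tracking order and paths would remove that false positive at a higher analysis cost.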

Software Evolution

Modern applications evolve rapidly through continuous integration and frequent deployments. Vulnerabilities often emerge gradually as multiple commits interact in unexpected ways.

Empirical research, including The Secret Life of Software Vulnerabilities (Iannone et al.), shows that vulnerabilities frequently require several commits before they fully materialize.

A scanner analyzing any single snapshot of the codebase may see nothing unusual, even though the combination of changes eventually produces a vulnerability.

Distributed Architectures

APIs, microservices, and distributed systems further expand the detection gap. Many vulnerabilities now arise from interactions between services rather than from isolated code defects.

These weaknesses appear only when the system is exercised through real workflows, something static scanners struggle to model.
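The following sketch models three hypothetical services as plain Python functions to show how mismatched trust assumptions can create such a weakness. The service names, data, and call paths are invented for illustration and do not describe any specific architecture.

```python
ORDERS = {
    1: {"owner": "alice", "item": "laptop"},
    2: {"owner": "bob", "item": "phone"},
}


def order_service_get(order_id: int) -> dict:
    """Internal order service. It assumes every caller inside the
    network has already authorized the request."""
    return ORDERS[order_id]


def gateway_get_order(user: str, order_id: int) -> dict:
    """Public API gateway. It enforces ownership for its own route."""
    order = order_service_get(order_id)
    if order["owner"] != user:
        raise PermissionError("forbidden")
    return order


def export_service(user: str, order_ids: list[int]) -> list[dict]:
    """Export service added later. It assumes the order service enforces
    access control itself, so it passes user-supplied ids straight through."""
    return [order_service_get(order_id) for order_id in order_ids]


exported = export_service("alice", [2])  # alice receives bob's order
print(exported)
```

Each function is defensible given its own assumption. The flaw is that the assumptions disagree, and that disagreement is only observable when a request travels through the export path end to end.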

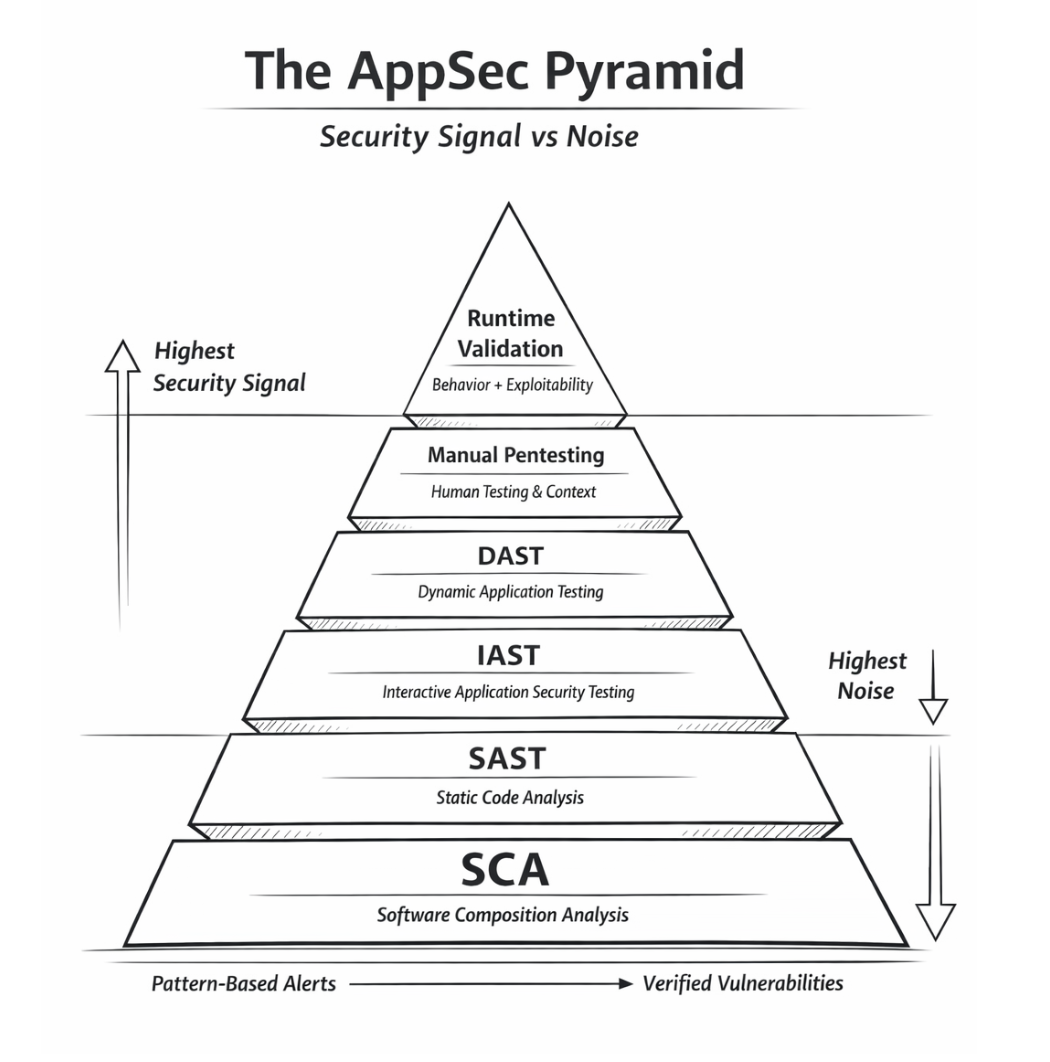

The AppSec Pyramid

Security tools do not produce equal signal.

Pattern detection tools generate the most alerts.

Behavior-driven security validation produces the highest-confidence findings.

Understanding this hierarchy is key to closing the AppSec detection gap.

Why the Gap Matters for Developers

For DevSecOps teams, the detection gap has direct operational consequences.

When security scanners produce thousands of alerts with uncertain exploitability, developers must invest time evaluating findings that may not represent real risk. Over time, this process can erode trust in security tools and lead to alert fatigue, where developers begin to ignore warnings altogether.

Meanwhile, the vulnerabilities that attackers ultimately exploit may originate from behavioral weaknesses that never triggered alerts in the first place.

This creates a dangerous asymmetry. Security teams may feel confident because scanners report extensive coverage, while attackers continue to discover vulnerabilities that the tools were never designed to detect.

Closing the Detection Gap

Closing the AppSec detection gap requires expanding beyond traditional pattern-based analysis.

Emerging approaches in application security increasingly focus on understanding how software behaves rather than simply how it is written.

Semantic program analysis techniques attempt to track data flows through systems and identify violations of security invariants. Behavioral testing frameworks explore application workflows to uncover vulnerabilities that emerge only during runtime interaction. Hybrid security approaches combine static analysis with dynamic testing and runtime instrumentation to provide richer context for vulnerability detection.
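As a rough sketch of what checking a security invariant through behavior can look like, the harness below exercises every combination of user and object against a handler and records any case where a non-owner is allowed to read. The handlers, data, and the `check_ownership_invariant` helper are hypothetical; real behavioral testing tools explore far larger workflows.

```python
from itertools import product

NOTES = {
    1: {"owner": "alice", "text": "alice's note"},
    2: {"owner": "bob", "text": "bob's note"},
}
USERS = ["alice", "bob"]


def read_note(user: str, note_id: int) -> str:
    """Hypothetical handler under test; it omits the ownership check."""
    return NOTES[note_id]["text"]


def read_note_fixed(user: str, note_id: int) -> str:
    """The same handler with the ownership check in place."""
    note = NOTES[note_id]
    if note["owner"] != user:
        raise PermissionError("forbidden")
    return note["text"]


def check_ownership_invariant(handler, users, notes):
    """Invariant: a user may read only the notes they own.
    Returns every (user, note_id) pair that violates it."""
    violations = []
    for user, (note_id, note) in product(users, notes.items()):
        try:
            handler(user, note_id)
            allowed = True
        except PermissionError:
            allowed = False
        if allowed and note["owner"] != user:
            violations.append((user, note_id))
    return violations


print(check_ownership_invariant(read_note, USERS, NOTES))
print(check_ownership_invariant(read_note_fixed, USERS, NOTES))
```

The harness never inspects how the handler is written. It only observes what the handler permits, so each violation it reports corresponds to access that actually succeeded.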

Machine learning techniques trained on historical vulnerability data are also beginning to assist in identifying complex vulnerability patterns that traditional rule systems may miss.

These approaches aim to bridge the gap between code patterns and system behavior.

The Future of Application Security

The lesson emerging from decades of research is clear. Application security tools must evolve alongside the software systems they are designed to protect.

As applications become increasingly distributed, API-driven, and continuously evolving, the vulnerabilities that threaten them will continue to emerge from complex interactions across components and workflows.

Security tools that rely solely on pattern matching will struggle to keep pace with this complexity.

Reducing false positives is not merely about improving developer productivity. It is about ensuring that the signals developers receive correspond to the vulnerabilities attackers are actually exploiting.

The future of application security will depend on closing the AppSec detection gap.

And doing so will require security technologies that understand software behavior as deeply as attackers do.

References

- Iannone, E., Guadagni, R., Ferrucci, F., De Lucia, A., Palomba, F. The Secret Life of Software Vulnerabilities: A Large-Scale Empirical Study. IEEE Transactions on Software Engineering.

- Chen, S. et al. Comparison and Evaluation on Static Application Security Testing (SAST) Tools for Java. Proceedings of ESEC/FSE, 2023.

- Meng, N. et al. An Empirical Study on the Effectiveness of Static C/C++ Analyzers for Vulnerability Detection. ISSTA, 2022.

- Zhang, H. et al. An Empirical Study of Static Analysis Tools for Secure Code Review. arXiv:2407.12241.

- Li, Y. et al. An Empirical Study of False Negatives and Positives of Static Code Analyzers From the Perspective of Historical Issues. arXiv, 2024.

- Wedyan, F., Alrumny, A., Bieman, J. The Effectiveness of Automated Static Analysis Tools for Fault Detection and Refactoring Prediction. ICST, 2009.

- Beller, M. et al. How Many of All Bugs Do We Find? A Study of Static Bug Detectors. ASE, 2018.

Modern AppSec has a noise problem.

Security scanners generate thousands of alerts.

Yet the vulnerabilities that lead to breaches often bypass them entirely.

This is what I call the AppSec Detection Gap.

Research across thousands of vulnerabilities shows:

- 76% of scanner warnings are unrelated to real vulnerabilities

- 87% of real vulnerabilities are missed by scanners

- vulnerabilities often require multiple commits to emerge

The reason is simple.
