Application security programs today face a paradox. Organizations are deploying more security tools than ever before, yet many developers are paying less attention to their findings.

The problem is not developer apathy. Most engineering teams understand the importance of security and want to build resilient systems. The real problem is something more subtle but far more damaging.

Too many security alerts lack credibility.

When security tools repeatedly report issues that turn out to be irrelevant, non-exploitable, or impossible to reproduce, developers gradually begin to ignore them. Over time, security alerts come to resemble the classic fable of the boy who cried wolf: the more often warnings turn out to be false alarms, the less likely people are to react when a warning is real.

This phenomenon can be described as developer trust erosion. It is quietly undermining the effectiveness of application security programs across the industry, and it is the reason approaches such as Semantic Runtime Validation are built to produce proof rather than pattern guesses.

The False Positive Problem in Modern AppSec

Security scanners have become a standard component of modern development pipelines. Static analysis tools, dynamic scanners, dependency analyzers, and API testing platforms continuously analyze code and infrastructure for weaknesses.

These tools play an important role in improving security hygiene. However, many of them operate primarily by detecting patterns associated with vulnerabilities rather than verifying actual exploitability.

For example, scanners commonly flag:

• SQL queries constructed using user input

• Potential unsafe deserialization patterns

• Use of outdated libraries

• Cryptographic functions considered weak

• API endpoints that may expose sensitive objects

These patterns are useful indicators of potential risk, but they do not necessarily confirm that an attack is possible.
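To make the distinction concrete, here is a minimal Python sketch, not any real scanner's logic. It assumes a deliberately naive rule (the hypothetical `SQL_FSTRING` regex) that flags any f-string building a SQL statement. Both hypothetical lookup functions match the rule, but only one is exploitable: the second converts its input with `int()` before the query is built, so an injection payload never reaches the database.

```python
import re
import sqlite3

# Hypothetical, deliberately naive rule: flag any f-string that starts a SQL statement.
SQL_FSTRING = re.compile(r'f"\s*(SELECT|INSERT|UPDATE|DELETE)\b', re.IGNORECASE)

# Application code under review, kept as text so the "scanner" can read it.
APP_SOURCE = '''
def find_user_by_name(conn, name):
    # name arrives straight from the request, so it is attacker-controlled
    return conn.execute(f"SELECT id FROM users WHERE name = '{name}'").fetchall()

def find_user_by_id(conn, raw_id):
    user_id = int(raw_id)  # non-numeric input raises before any query is built
    return conn.execute(f"SELECT id FROM users WHERE id = {user_id}").fetchall()
'''


def scan(source: str) -> list[int]:
    """Return the 1-based line numbers that match the naive SQL rule."""
    return [n for n, line in enumerate(source.splitlines(), start=1) if SQL_FSTRING.search(line)]


def demo() -> tuple[list[int], int, bool]:
    app = {}
    exec(APP_SOURCE, app)  # load the reviewed functions into a namespace
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE users (id INTEGER, name TEXT)")
    conn.executemany("INSERT INTO users VALUES (?, ?)", [(1, "alice"), (2, "bob")])

    payload = "x' OR '1'='1"
    leaked = app["find_user_by_name"](conn, payload)  # injected condition matches every row
    try:
        app["find_user_by_id"](conn, payload)
        blocked = False
    except ValueError:
        blocked = True  # int() rejected the payload, so no query ran
    return scan(APP_SOURCE), len(leaked), blocked


if __name__ == "__main__":
    flagged, leaked_rows, blocked = demo()
    print(f"flagged lines: {flagged}, rows leaked by name lookup: {leaked_rows}, id lookup blocked: {blocked}")
```

The rule flags both queries, yet only the name lookup leaks rows for the payload `x' OR '1'='1`. A pattern rule cannot see the `int()` conversion that neutralizes the second query; a developer has to read the code to find that out. Parameterized queries would remove both matches; the point here is only that a match alone does not prove exploitability.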

As a result, security tools often generate alerts that require developers to manually determine whether a vulnerability actually exists.

Numerous studies have highlighted the scale of this problem.

The false positive crisis affecting modern AppSec tools is well documented: automated scanners frequently produce false positive rates between 30 percent and 70 percent, depending on the environment and configuration. The pressure is growing as AI code generation changes the economics of AppSec faster than tool-based programs can respond. Academic research examining developer behavior has also shown that engineers often abandon static analysis tools when the cost of investigating alerts outweighs the perceived benefit.
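A quick calculation shows what the quoted 30 to 70 percent range means in practice. The Python sketch below assumes a hypothetical batch of 200 alerts and a hypothetical 30 minutes of investigation per alert; both numbers are illustrative, not measurements.

```python
def triage_cost(alerts: int, fp_percent: int, minutes_per_alert: int) -> tuple[float, float]:
    """Return (real findings, hours spent investigating false alarms)."""
    false_alarms = alerts * fp_percent / 100
    real_findings = alerts - false_alarms
    wasted_hours = false_alarms * minutes_per_alert / 60
    return real_findings, wasted_hours


if __name__ == "__main__":
    # Hypothetical inputs: 200 alerts, 30 minutes of triage each.
    for rate in (30, 70):
        real, wasted = triage_cost(alerts=200, fp_percent=rate, minutes_per_alert=30)
        print(f"{rate}% false positives: {real:.0f} real findings, {wasted:.0f} hours on false alarms")
```

Under these assumptions, a 70 percent false positive rate means spending 70 hours clearing false alarms to surface 60 real findings, the kind of imbalance that leads engineers to abandon a tool.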

Over time, developers learn that many alerts are not actionable.

And once that perception forms, trust begins to erode.

The Psychological Impact on Engineering Teams

Software developers operate under constant pressure to deliver features, maintain reliability, and respond to operational incidents. Security alerts compete directly with these priorities.

When alerts repeatedly prove to be harmless, developers naturally begin to treat them as background noise.

Several predictable behavioral patterns emerge.

First, security findings become lower priority in the development backlog. Teams defer remediation work until compliance deadlines or security audits force action.

Second, alerts are dismissed more quickly. Developers assume that the next finding is likely another false alarm.

Third, security tools are run primarily to satisfy governance requirements rather than to discover real risk.

This behavior closely mirrors the phenomenon known as alert fatigue, which has been widely documented in healthcare systems. When clinicians receive excessive monitoring alerts that frequently prove irrelevant, they begin ignoring warnings altogether. The result is that important signals can be missed.

In application security programs, the same dynamic occurs.

Critical vulnerabilities may exist, but they are hidden within a flood of alerts that engineers have learned to distrust.

Why Pattern-Based Security Tools Produce Noise

The root cause of developer trust erosion lies in how most security tools analyze software.

Traditional scanners rely heavily on pattern recognition. Static analysis engines look for code structures that resemble known weakness classes, such as those catalogued in MITRE's Common Weakness Enumeration (CWE). Dynamic scanners attempt to trigger generic attack patterns against running applications.

While these techniques are useful for detecting common weaknesses, they often lack the context necessary to determine whether an attack path actually exists.

Important questions remain unanswered:

• Can an attacker reach the vulnerable code path?

• Is the input actually controllable by external users?

• Do authorization checks prevent access to the object?

• Does the system architecture neutralize the risk?

• Can the vulnerability be reproduced in a real execution flow?
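Taken together, these questions form a conjunction: a pattern match becomes a confirmed exploit path only when every answer points toward exploitability. A minimal Python sketch of that triage rule follows. The `Finding` fields and the `is_confirmed_exploit_path` function are hypothetical, and in practice each field has to be established by analysis or testing rather than filled in by hand.

```python
from dataclasses import dataclass


@dataclass
class Finding:
    rule: str                    # the pattern that fired, e.g. "sql-string-building"
    reachable: bool              # can an attacker reach the flagged code path?
    input_controllable: bool     # is the input controllable by external users?
    blocked_by_authz: bool       # does an authorization check prevent access?
    neutralized_by_design: bool  # does the architecture neutralize the risk?
    reproduced: bool             # was the issue reproduced in a real execution flow?


def is_confirmed_exploit_path(f: Finding) -> bool:
    """A match is an exploit path only if every question resolves toward exploitability."""
    return (
        f.reachable
        and f.input_controllable
        and not f.blocked_by_authz
        and not f.neutralized_by_design
        and f.reproduced
    )


findings = [
    Finding("sql-string-building", True, True, False, False, True),
    Finding("sql-string-building", True, False, False, False, False),  # input is server-generated
    Finding("outdated-library", False, False, False, False, False),    # vulnerable function never called
]
confirmed = [f for f in findings if is_confirmed_exploit_path(f)]
```

Only the first finding survives the filter. A scanner that reports all three leaves the developer to answer these questions by hand for each one.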

Without this context, scanners frequently produce theoretical vulnerabilities rather than confirmed exploit paths.

Developers then become responsible for investigating each alert to determine whether it represents real risk.

This investigative burden is one of the primary reasons security alerts are ignored.

The Hidden Cost of Security Noise

Developer trust erosion has consequences that extend far beyond inconvenience.

When engineering teams begin to distrust security alerts, the effectiveness of the entire AppSec program declines.

Security teams gradually lose credibility within engineering organizations. If previous alerts proved irrelevant, developers may question whether future findings are meaningful.

At the same time, genuine vulnerabilities may go unnoticed. High-impact issues can become buried within large volumes of alerts that engineers have learned to dismiss.

In some organizations, security scanning eventually becomes little more than compliance theater. Tools continue to run, reports continue to be generated, and dashboards continue to show activity, but the findings no longer influence engineering decisions.

Security exists, but it is no longer trusted.

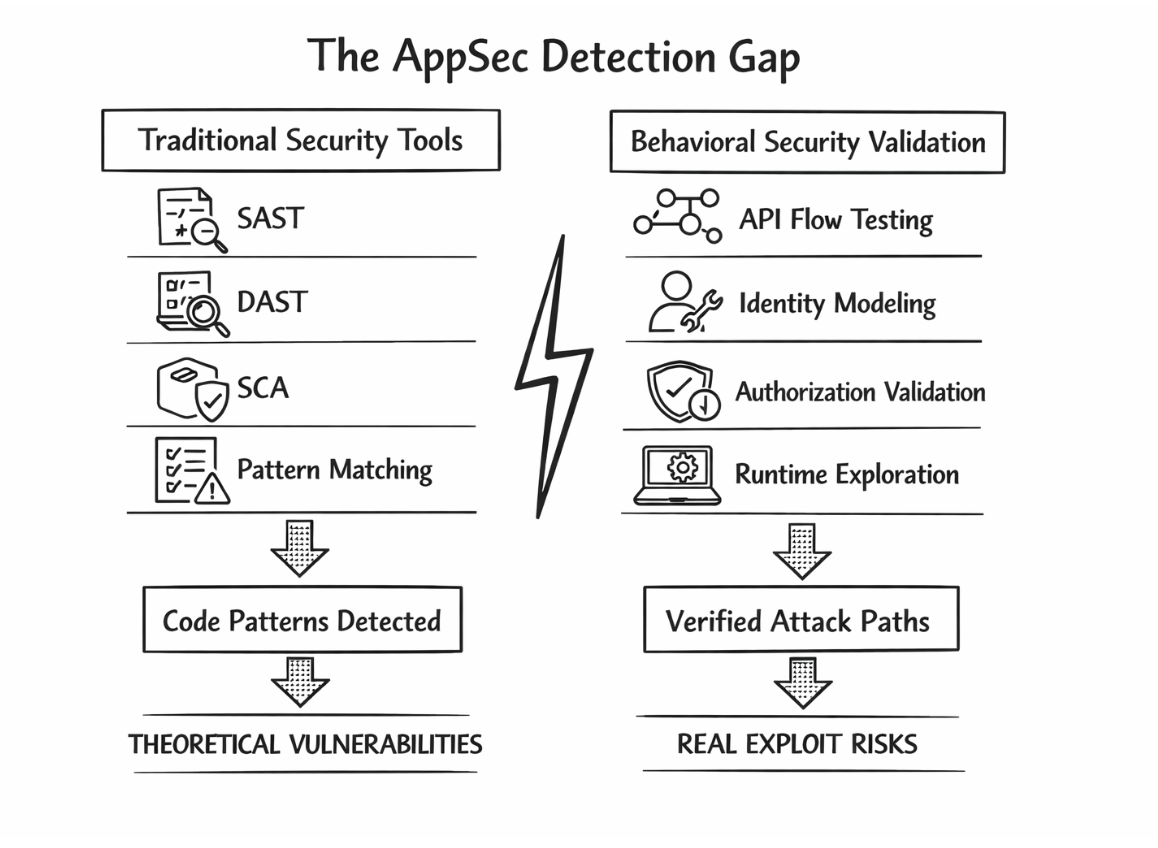

The AppSec Detection Gap

At the heart of the trust problem lies a deeper technical issue known as the AppSec detection gap.

Traditional security tools are designed to detect weakness patterns in code. Attackers, however, exploit behavioral weaknesses in systems.

Many of the most damaging security incidents today are not caused by simple coding mistakes. Instead, they emerge from complex interactions between components.

Examples include:

• Broken Object Level Authorization (BOLA)

• Business logic vulnerabilities

• Authorization inconsistencies across microservices

• API workflow abuse

• Identity misconfigurations

These vulnerabilities do not appear as simple code patterns. They emerge only when systems are exercised through real user flows and authorization relationships.
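The following Python sketch illustrates why BOLA resists pattern matching. The hypothetical `get_invoice` handler contains nothing a pattern rule would flag: no string-built queries and no dangerous calls. The flaw is a missing ownership check, and it only shows up when the handler is exercised as one user requesting another user's object, which is what the hypothetical `probe_bola` helper does. The in-memory `INVOICES` store stands in for a real database and API layer.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Invoice:
    id: int
    owner: str
    amount: int


# Hypothetical data store standing in for a real database.
INVOICES = {1: Invoice(1, "alice", 120), 2: Invoice(2, "bob", 75)}


def get_invoice(current_user: str, invoice_id: int) -> tuple[int, Optional[Invoice]]:
    """Vulnerable handler: the caller is authenticated, but ownership is never checked."""
    invoice = INVOICES.get(invoice_id)
    if invoice is None:
        return 404, None
    return 200, invoice


def get_invoice_fixed(current_user: str, invoice_id: int) -> tuple[int, Optional[Invoice]]:
    """Fixed handler: another user's invoice gets the same response as a missing one."""
    invoice = INVOICES.get(invoice_id)
    if invoice is None or invoice.owner != current_user:
        return 404, None
    return 200, invoice


def probe_bola(handler, users: list[str]) -> list[tuple[str, int]]:
    """Call the handler as every user for every object and report cross-owner reads."""
    leaks = []
    for user in users:
        for obj_id, obj in INVOICES.items():
            status, _ = handler(user, obj_id)
            if status == 200 and obj.owner != user:
                leaks.append((user, obj_id))
    return leaks


if __name__ == "__main__":
    print("vulnerable:", probe_bola(get_invoice, ["alice", "bob"]))
    print("fixed:", probe_bola(get_invoice_fixed, ["alice", "bob"]))
```

Run against the vulnerable handler, `probe_bola` reports that alice can read invoice 2 and bob can read invoice 1; against `get_invoice_fixed`, it reports nothing. Returning 404 rather than 403 for another user's object is one common design choice, since it avoids confirming that the object exists.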

This gap between pattern detection and behavioral validation is one of the main reasons modern security scanners struggle to detect the vulnerabilities that matter most.

Toward Security Signals Developers Can Trust

Restoring developer trust requires a shift in how security tools generate alerts.

Instead of asking whether code resembles a known vulnerability pattern, security platforms must demonstrate whether an attack is actually possible.

This requires analyzing how applications behave during real interactions.

Modern approaches increasingly focus on validating:

• Identity relationships

• Object ownership models

• Authorization enforcement

• API workflows

• Cross-service interactions

By exploring how systems behave under real execution flows, security tools can identify vulnerabilities that represent actual attack paths rather than theoretical weaknesses.

When alerts include clear evidence, reproduction steps, and exploit context, developers respond very differently.

Instead of ignoring the alert, they treat it as an engineering task.
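As a rough illustration of what such an alert might carry, here is a Python sketch of an evidence-bearing finding rendered as a ticket. The `EvidenceFinding` class, its fields, and the `/invoices/2` endpoint are hypothetical; they show the shape of the information (exact request, expected versus observed behavior, reproduction steps), not any particular product's report format.

```python
from dataclasses import dataclass, field


@dataclass
class EvidenceFinding:
    title: str
    request: str    # the exact request that demonstrated the issue
    expected: str   # what a correctly behaving system returns
    observed: str   # what the system actually returned
    steps: list[str] = field(default_factory=list)  # reproduction steps, in order

    def to_ticket(self) -> str:
        """Render the finding as a plain-text ticket a developer can act on."""
        lines = [self.title, "", "Steps to reproduce:"]
        lines += [f"{n}. {step}" for n, step in enumerate(self.steps, start=1)]
        lines += [
            "",
            f"Request:  {self.request}",
            f"Expected: {self.expected}",
            f"Observed: {self.observed}",
        ]
        return "\n".join(lines)


finding = EvidenceFinding(
    title="BOLA: any authenticated user can read other users' invoices",
    request="GET /invoices/2 (authenticated as alice)",
    expected="404 Not Found",
    observed="200 OK with bob's invoice",
    steps=[
        "Log in as alice.",
        "Request GET /invoices/2, which belongs to bob.",
        "Observe that bob's invoice is returned.",
    ],
)
print(finding.to_ticket())
```

Compared with a bare rule ID and file path, a ticket like this lets a developer reproduce the issue before deciding how to fix it, the same workflow they already apply to production bugs.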

Trust begins to return. Semantic Runtime Validation is what makes that proof possible, replacing pattern guesses with behavioral evidence that developers can act on. Runtime is where attacks happen, and it is the only place where proof of exploitability exists. Measuring the program-level impact of this shift is what the AppSec ROI Framework is built for.

Rebuilding Trust Between Security and Developers

Security programs succeed only when developers believe that security findings are worth fixing.

When alerts are accurate and actionable, developers treat them the same way they treat production bugs. They investigate them, reproduce them, and resolve them.

When alerts are noisy and unreliable, the opposite occurs.

Developers stop listening.

The future of application security will not be determined by how many alerts tools can generate. It will be determined by how effectively security systems produce credible signals that developers trust.

Because once security alerts become a case of crying wolf, even the real wolves may go unnoticed.

Take control of your Application and API security

See how Aptori’s award-winning, AI-driven platform uncovers hidden business logic risks across your code, applications, and APIs. Aptori prioritizes the risks that matter and automates remediation, helping teams move from reactive security to continuous assurance.
