The Case for Offensive Runtime Validation: Finding Weaknesses Before Attackers Do

In 2026, vulnerabilities enter pipelines at AI-speed. Security teams still move at human-speed. That asymmetry is not a temporary inconvenience. It is a structural problem, and it is getting wider.

The tools designed to close that gap were built for a different era. And the gap they cannot cross is not a gap in coverage, or headcount, or process maturity. It is a gap in where security is measured versus where attacks actually occur.

Attacks do not happen in code repositories, scan reports, or testing environments. They happen in runtime, where real identities interact with real systems under real conditions. When a breach is analyzed in detail, it rarely traces back to a single isolated flaw. It reveals a sequence of interactions the system allowed, often across multiple services and layers of logic. The system behaved in a way that made the attack possible, and that behavior only exists in runtime.

Offensive Runtime Validation is not about catching an attacker in the act - that is the job of WAFs and EDR. It is about proactively exercising the live system the way an attacker would, finding and closing exploitable paths before they are ever used. That distinction matters for everything that follows.

The Limits of Tool-Centric Security

The foundational tools of application security were designed for a different generation of systems. Static analysis was built to reason about code structure and identify known vulnerability patterns before execution. Dynamic testing extended visibility outward, to the running application's exposed interfaces. Penetration testing added human intuition, enabling the discovery of attack paths that automated tools could not easily detect.

These approaches remain relevant. They are no longer sufficient on their own.

Modern applications are distributed systems composed of APIs, identity providers, microservices, and shared data layers. Their behavior is determined by how those components interact, which is the same behavioral gap that makes business logic vulnerabilities the hardest class of risk to detect. Data flows traverse boundaries that are not visible from a single vantage point. Sequences of requests, rather than individual calls, define the actual behavior of the system.

Under these conditions, traditional tools lose fidelity. Static analysis cannot determine how authorization behaves across services under real identities. Dynamic scanners are limited to the paths they are configured to explore. Penetration tests provide valuable insight but are inherently episodic, capturing a moment in a system that is continuously changing.

The result is visibility that is broad but not definitive. Teams can identify potential weaknesses. They cannot reliably determine which ones are actually exploitable.

The Backlog Reflects Uncertainty, Not Scale

The impact of this limitation is most visible in the findings backlog that nearly every security team struggles to manage. Findings accumulate faster than they are resolved, prioritization becomes increasingly complex, and development teams engage selectively based on competing demands.

This situation is often described as a resource problem. In practice, it is a signal problem.

Here is the part that makes it worse:

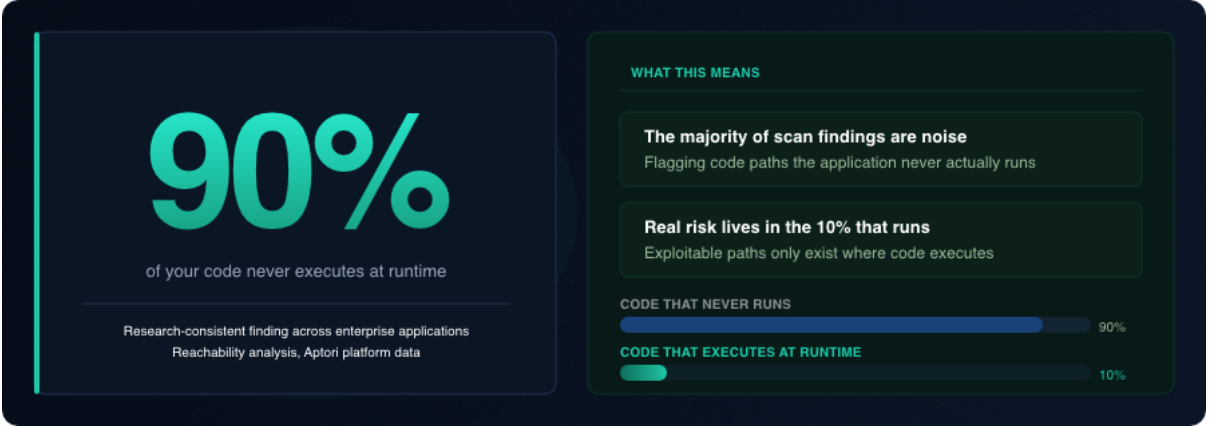

"If 90% of your code never runs, 90% of your static analysis findings are likely chasing ghosts."

Research consistently shows that roughly 90% of code never executes at runtime. That means the majority of findings generated by static analysis and pre-deployment scanning are likely flagging code paths the application never actually runs. Those findings are irrelevant to the real attack surface. Teams are spending remediation cycles on theoretical exposure in dead code while genuinely exploitable paths in the live system go unverified, which is the root of the false positive crisis in AppSec tools. The underlying cause is structural: AI code generation is changing the economics of AppSec faster than linear team scaling can address.
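One way to picture the triage this implies is to split static findings by whether the code they point at was ever observed executing. The sketch below is illustrative only: the `Finding` type, the function names, and the `executed` set are hypothetical, and in practice the set of executed functions would have to come from whatever runtime instrumentation is available.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Finding:
    rule: str      # scanner rule that fired (hypothetical names below)
    function: str  # qualified name of the function the finding points at


def partition_by_runtime(findings, executed_functions):
    """Split findings into those in code observed executing and those not."""
    live = [f for f in findings if f.function in executed_functions]
    unobserved = [f for f in findings if f.function not in executed_functions]
    return live, unobserved


# Hypothetical scanner output and runtime trace, for illustration only.
findings = [
    Finding("sql-injection", "billing.export_csv"),
    Finding("path-traversal", "legacy.import_v1"),
    Finding("weak-hash", "legacy.hash_md5"),
]
executed = {"billing.export_csv", "auth.login"}

live, unobserved = partition_by_runtime(findings, executed)
print([f.rule for f in live])        # findings worth validating first
print([f.rule for f in unobserved])  # findings in code never seen running
```

A finding in the unobserved group is not proven harmless, since the observation window may simply have missed that path. The split only suggests where validation effort is likely to pay off first.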

Engineering teams routinely respond to production issues with urgency and clarity. When a system fails in a way that affects users or business operations, the problem is immediately understood and remediation follows quickly. Security findings, by contrast, are frequently delivered without that level of clarity. They describe conditions that could be exploitable, but they rarely demonstrate how exploitation would occur within the context of the system as it actually operates.

Without a verified attack path, a finding remains hypothetical. Developers are asked to act on possibility rather than certainty, and in environments where effort must be carefully allocated, hypothetical risk is consistently deprioritized.

The backlog grows because most of what developers are handed is noise, and they know it, a dynamic that drives developer trust erosion across every security program operating this way.

The Boundary Between Findings and Proof

Over time, the industry has introduced a range of approaches to address this gap. Correlation platforms aggregate signals across tools to improve prioritization. Bug bounty programs and continuous penetration testing introduce external perspectives. Risk-based scoring systems attempt to quantify likelihood and impact. Shifting security left aims to prevent issues before they reach production.

Each of these improves some aspect of the problem. None eliminates it.

At the center is a boundary that remains intact.

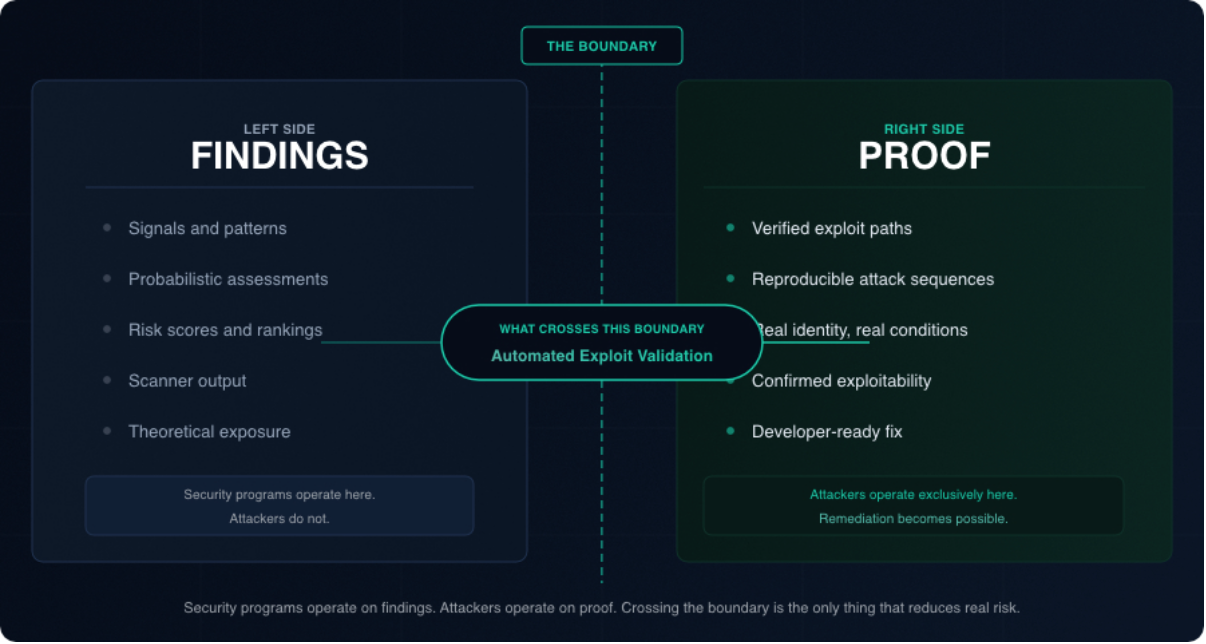

On one side: findings. Signals, patterns, and probabilistic assessments of risk. On the other side: proof. A concrete, reproducible sequence of actions that demonstrates how a vulnerability can be exploited in a live system.

Security programs largely operate on the side of findings. Attackers operate exclusively on the side of proof.

What crosses that boundary is Automated Exploit Validation: the process of taking a hypothetical threat and determining, through execution in a live runtime context, whether it represents a real, exploitable attack path. Not a simulation. Not a theoretical model. Validation against the system as it runs, under real identities and real conditions.

When exploitability is confirmed this way, everything downstream changes. Remediation is no longer speculative. Engineers know exactly what sequence of actions produces the undesired outcome, and the fix is targeted precisely at breaking that sequence. That specificity is what closes backlogs. That is what gets security work prioritized above the next sprint's feature work.

As long as the boundary between findings and proof remains uncrossed, the gap between detection and actual risk will persist.

Runtime as the Source of Truth

This boundary exists because there is a fundamental difference between how systems are described and how they behave. Code, specifications, and test cases represent intended behavior. Runtime reflects actual behavior under real conditions.

Runtime is where identities are resolved and propagated, where authorization logic executes across multiple layers, and where state evolves through sequences of interactions. It is the only environment in which the full set of system behaviors can be observed.

This is why modern vulnerabilities, particularly those involving authorization and business logic, are difficult to detect through static or surface-level testing. They do not arise from isolated defects. They arise only when identity, state, and sequence collide in the live system. A static scanner sees a 'save' function; only runtime validation sees that User A is using that function to overwrite User B's data - a class of flaw known as Broken Object Level Authorization. No test case predicted it. No scan flagged it. It only becomes visible when the right identity makes the right sequence of requests against a system carrying real state.
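The 'save' example can be made concrete with a toy model. The sketch below is an assumption-laden illustration, not a description of any real product or API: `NoteService` is a hypothetical in-memory service with a deliberately missing ownership check, and `validate_bola` plays the role of a runtime check that acts as User A against state owned by User B.

```python
class NoteService:
    """Toy in-memory service with a deliberately missing ownership check."""

    def __init__(self):
        self._notes = {}  # note_id -> {"owner": str, "body": str}

    def create(self, user, note_id, body):
        self._notes[note_id] = {"owner": user, "body": body}

    def save(self, user, note_id, body):
        # Flaw: the caller is identified, but ownership is never compared.
        # Read in isolation, this looks like an ordinary 'save' function.
        if note_id not in self._notes:
            raise KeyError(note_id)
        self._notes[note_id]["body"] = body

    def read(self, user, note_id):
        return self._notes[note_id]["body"]


def validate_bola(service):
    """Return True if user_a can overwrite user_b's note (exploit confirmed)."""
    service.create("user_b", "note-1", "B's original text")
    try:
        service.save("user_a", "note-1", "overwritten by A")
    except PermissionError:
        return False  # the service refused the cross-user write
    return service.read("user_b", "note-1") == "overwritten by A"


exploitable = validate_bola(NoteService())
print("BOLA confirmed" if exploitable else "not exploitable")
```

The flaw only shows up once two identities and existing state are in play, which is the combination of identity, state, and sequence described above.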

When a breach is analyzed, the exploit is always grounded in runtime behavior. Not a theoretical condition. A reproducible path that worked against the system as deployed.

The Question Most Programs Cannot Answer

Security programs are typically measured by findings volume, coverage, and time to remediation - none of which can confirm whether a system is actually secure by design. These metrics provide useful operational visibility. They are indirect measures of security. Here is what they actually tell you, and what they miss:

The question that actually matters is one most programs cannot answer today:

How many exploitable paths currently exist in your environment, and how quickly are they being closed?

Most teams don't know. They know how many findings are open. They know scan coverage percentages. They do not know how many of those findings represent conditions an attacker could actually use right now, in the live system, to cause real harm.

That gap, between what programs measure and what attackers pursue, is where risk lives. A program that cannot answer the exploitability question is not controlling risk. It is approximating it.
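As a rough sketch of what answering that question could look like, the snippet below tracks validated exploit paths rather than raw findings and derives the two numbers the question asks for. The `ValidatedPath` record, the path names, and the dates are all hypothetical.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from typing import Optional


@dataclass
class ValidatedPath:
    name: str
    confirmed_at: datetime                 # when exploitation was reproduced
    closed_at: Optional[datetime] = None   # when the path stopped working


def open_paths(paths):
    """Exploit paths that have been confirmed and still work."""
    return [p for p in paths if p.closed_at is None]


def mean_time_to_close(paths):
    """Average confirmed-to-closed duration, or None if nothing is closed yet."""
    durations = [p.closed_at - p.confirmed_at for p in paths if p.closed_at]
    if not durations:
        return None
    return sum(durations, timedelta()) / len(durations)


# Hypothetical records, for illustration only.
t0 = datetime(2026, 1, 1)
paths = [
    ValidatedPath("cross-tenant invoice read", t0, t0 + timedelta(days=2)),
    ValidatedPath("note overwrite", t0, t0 + timedelta(days=4)),
    ValidatedPath("coupon replay", t0 + timedelta(days=1)),
]

print(len(open_paths(paths)))     # how many exploitable paths exist right now
print(mean_time_to_close(paths))  # how quickly confirmed paths are being closed
```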

Conclusion

In an environment where AI writes code faster than humans can review it, the volume of vulnerabilities entering production is not going to slow down. The economics of software development have changed permanently. Security programs that rely on reviewing everything, testing everything, and fixing everything will fall further behind. Not because of execution failures, but because the model is wrong.

Runtime is the last line of defense that cannot be bypassed by volume. An attacker operating against a live system faces the same runtime constraints as everyone else. The exploit path either works or it does not.

The shift that matters is from visibility to protection. Visibility tells you where weaknesses might exist. Protection means you have confirmed which ones are exploitable and eliminated them before an attacker finds them first.

The mechanism that operationalises this shift is Semantic Runtime Validation: continuous, automated, and integrated into the pipeline before the incident that would have required it. If an AI agent is going to fix code, it needs a failing test case, a concrete, reproducible proof of exploitation, to know whether the fix actually worked. The validated exploit path is that test case. It defines the problem precisely, guides the remediation, and confirms the outcome. Without it, AI-assisted fixing is as speculative as AI-assisted scanning: fast, but not grounded in what the live system will actually allow.
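A minimal sketch of that idea, under stated assumptions: the two `save_*` functions below are hypothetical stand-ins for a handler before and after a candidate fix, and `exploit_succeeds` replays one validated path against whichever version it is given. None of these names come from a real tool.

```python
def save_vulnerable(store, user, note_id, body):
    store[note_id]["body"] = body  # no ownership check


def save_fixed(store, user, note_id, body):
    if store[note_id]["owner"] != user:
        raise PermissionError("not the owner")
    store[note_id]["body"] = body


def fresh_store():
    return {"note-1": {"owner": "user_b", "body": "original"}}


def exploit_succeeds(save):
    """Replay the validated path: user_a tries to overwrite user_b's note."""
    store = fresh_store()
    try:
        save(store, "user_a", "note-1", "overwritten")
    except PermissionError:
        pass
    return store["note-1"]["body"] == "overwritten"


def fix_verified(save):
    """Accept a fix only if the exploit fails and the owner can still save."""
    store = fresh_store()
    save(store, "user_b", "note-1", "edited by owner")
    owner_ok = store["note-1"]["body"] == "edited by owner"
    return owner_ok and not exploit_succeeds(save)


print(exploit_succeeds(save_vulnerable))  # the exploit is the failing test
print(fix_verified(save_fixed))           # the fix closes it without breaking use
```

Checking the legitimate owner's write alongside the exploit is a design choice in this sketch: a fix that blocks everyone would also make the exploit fail, so the exploit alone is not enough to accept it.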

When AI writes the code and the AI Security Engineer assists with fixes, runtime validation is the mechanism that makes the feedback loop real. It is the only control that holds.

Attacks happen at runtime. The programs built to prevent them have to start there.
