Artificial intelligence is rapidly transforming the way software is built. Modern AI code generation tools allow developers to create functions, APIs, and entire application layers in seconds, dramatically accelerating development velocity and changing the economics of software engineering. For organizations focused on innovation and rapid product delivery, this new capability is enormously attractive because it allows teams to build more features, explore more ideas, and ship code faster than ever before.

However, this acceleration has an unintended consequence that security teams are only beginning to recognize, and it explains why approaches like Semantic Runtime Validation focus on proving exploitability rather than generating more alerts.

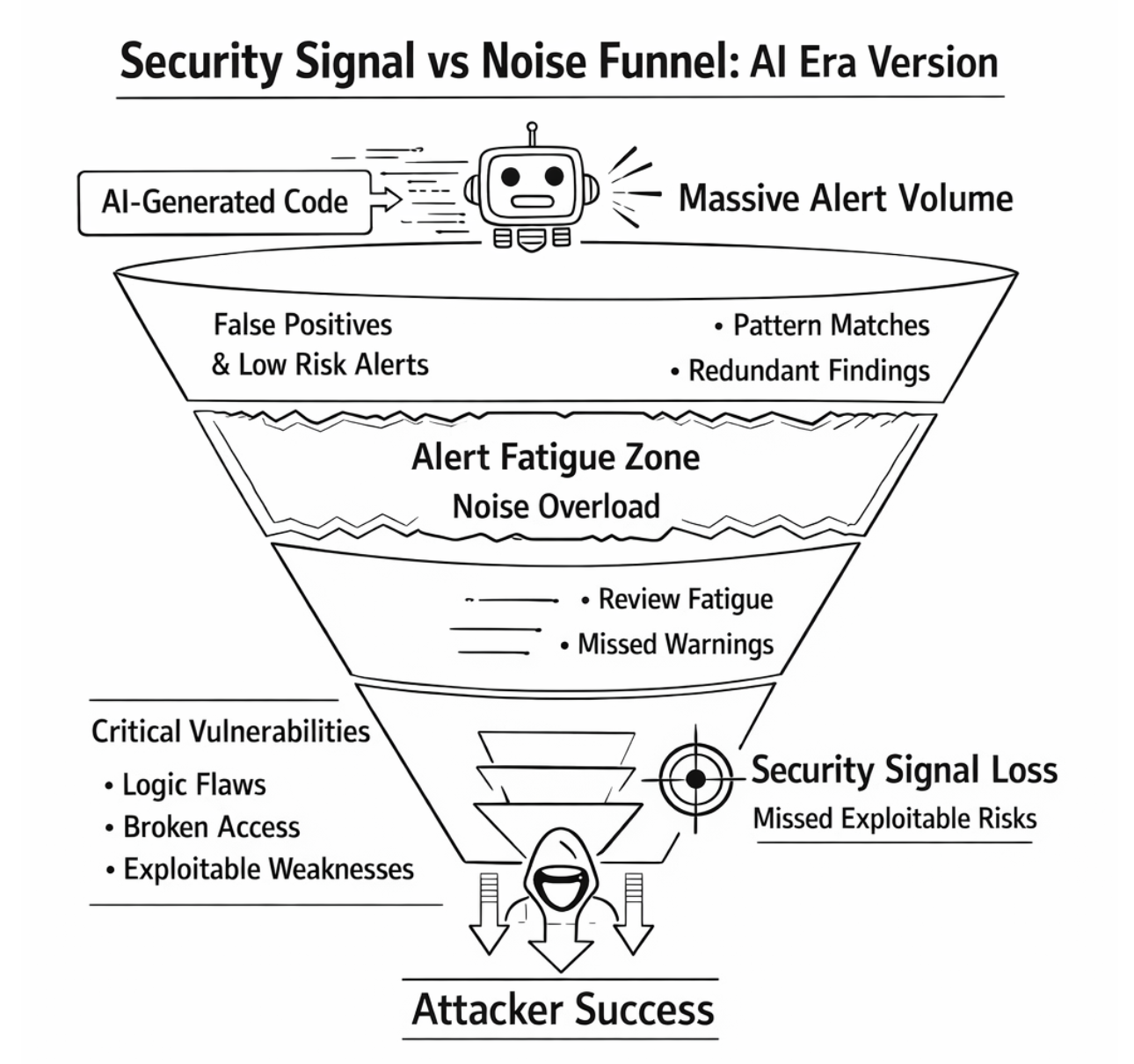

While AI systems dramatically increase the speed of software creation, they also increase the volume of security alerts generated by application security tools. In many organizations this phenomenon is creating what can best be described as the Alert Fatigue Multiplier Effect, a situation where the pace of AI-generated code amplifies the number of vulnerabilities reported by security scanners to the point that security teams struggle to keep up with the volume of findings.

The result is a paradox that many organizations are now experiencing.

Software development is becoming faster and more efficient, yet security programs are becoming increasingly overwhelmed by alerts.

The AI Acceleration of Code Production

Large language models trained on billions of lines of code now act as coding assistants that can generate working software components with minimal developer effort. Instead of manually writing each function, developers can describe the intended behavior and allow the AI system to produce the implementation. As a result, the bottleneck of software creation is no longer the speed at which developers type code, but the speed at which they can review and integrate generated output.

This shift has several important implications for application security.

First, the volume of code entering repositories is expanding dramatically. Teams that previously introduced incremental code changes now generate entire services or API layers within a single development cycle. The number of files, endpoints, and execution paths within modern applications grows accordingly.

Second, AI-generated code frequently mirrors patterns found in open source training data. Although the generated output is often syntactically correct and functionally valid, it may also include implementation shortcuts, insecure defaults, or repeated coding patterns that appear across many examples in public repositories.

Third, developers tend to trust AI-generated code because it compiles and appears logically correct. As a result, generated code may receive less scrutiny than manually written implementations, particularly when development timelines are aggressive.

From a security perspective, this combination introduces a larger and more complex codebase into production environments while increasing the likelihood that hidden weaknesses remain embedded within the generated logic.

Why AI-Generated Code Amplifies Security Alerts

Most application security tools rely on pattern recognition to identify potential vulnerabilities. Static analysis tools search for code structures associated with known weaknesses, dynamic scanners probe applications for behaviors that resemble attack signatures, and dependency scanners flag libraries with known vulnerabilities.

When the size of the codebase increases, the number of detected findings naturally increases as well. However, AI code generation introduces an additional dynamic that amplifies this effect.

Because AI systems frequently reproduce similar implementation patterns across multiple files and services, security scanners encounter the same structural patterns repeatedly. A single coding approach generated across dozens of endpoints may trigger the same security rule multiple times, resulting in hundreds or even thousands of alerts that represent variations of the same underlying condition.
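To make the repetition concrete, here is a minimal sketch of how one generated pattern can account for most of a scanner's output. The `Finding` record, the rule IDs, the file paths, and the whitespace-based fingerprint are all hypothetical and chosen for illustration. They are not the output format of any real scanner.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class Finding:
    rule_id: str   # scanner rule that fired (hypothetical IDs)
    file: str      # file where the pattern was matched
    snippet: str   # matched code, used to fingerprint the pattern


def normalize(snippet: str) -> str:
    """Collapse whitespace so trivially different copies compare equal."""
    return " ".join(snippet.split())


def group_findings(findings):
    """Group findings that share a rule and a normalized code pattern."""
    groups = defaultdict(list)
    for finding in findings:
        groups[(finding.rule_id, normalize(finding.snippet))].append(finding)
    return groups


# Forty generated endpoints that all repeat one query-building pattern,
# plus a single unrelated finding.
findings = [
    Finding("SQLI-001", f"services/endpoint_{i}.py",
            "cursor.execute(query  +  user_id)")
    for i in range(40)
] + [Finding("XSS-007", "web/render.py", "return '<p>' + comment + '</p>'")]

groups = group_findings(findings)
print(f"{len(findings)} alerts collapse into {len(groups)} underlying conditions")
```

Grouping of this kind only reduces the count. It says nothing about whether any of the grouped findings can actually be exploited.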

These findings are often technically valid according to the detection rules used by the scanner.

However, they are not always exploitable within the context of the application.

The structural roots of this problem run deeper than AI code generation alone. They are explored in the analysis of the false positive crisis in AppSec tools, and in the broader argument about how AI code generation is changing the economics of AppSec.

The Alert Fatigue Multiplier Effect

When large volumes of AI-generated code meet pattern-based security scanners, the number of reported findings increases at a rate that far exceeds the capacity of security teams to triage them effectively.

The sequence typically unfolds in a predictable way. AI tools accelerate code production, which expands the surface area analyzed by security scanners. Pattern-based detection rules then identify thousands of potential issues across the expanded codebase, producing a flood of alerts that security teams must review and interpret. Developers encounter repeated warnings that often lack clear exploitability context, and over time their confidence in security tooling begins to decline.

As the number of alerts increases, the signal-to-noise ratio deteriorates.

Security teams spend an increasing portion of their time evaluating alerts rather than addressing meaningful vulnerabilities, while developers begin to treat scanner findings as friction rather than guidance. Eventually organizations reach a point where investigating alerts requires more effort than fixing the vulnerabilities that actually matter.

This is the operational reality of alert fatigue, and it is the starting point of an erosion of developer trust that eventually makes security alerts invisible to engineering teams.

Why Traditional Security Scanners Struggle in the AI Era

Traditional application security scanners were designed for an environment in which software evolved incrementally and codebases grew at a manageable pace. Under those conditions, security findings appeared gradually enough that teams could triage alerts and prioritize remediation effectively.

AI-driven development disrupts this model.

When thousands of lines of code can appear in a repository within hours, scanners produce findings at a rate that human security teams cannot realistically evaluate. Yet the deeper challenge is not simply the volume of alerts.

The fundamental limitation lies in the way most scanners reason about vulnerabilities.

Pattern-based security tools are effective at identifying possible weaknesses, but they rarely determine whether those weaknesses are actually exploitable within the context of the application. For example, a scanner may detect a potential injection point because user input flows into a database query, yet it cannot easily determine whether authorization policies, parameterization mechanisms, or business logic constraints prevent exploitation.
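The injection example can be sketched in a few lines. The regular expression below is a deliberately naive stand-in for a pattern rule, not the logic of any real scanner, and the table, function names, and payload are assumptions made for illustration. Both call sites match the pattern, but only the concatenated query is exploitable when the code actually runs.

```python
import re
import sqlite3

# Hypothetical rule: flag any execute() call that mentions user input.
NAIVE_RULE = re.compile(r"execute\(.*user_input")

# Source text of the two call sites, as a pattern-based tool would see them.
CONCAT_SRC = "conn.execute(\"SELECT name FROM users WHERE name = '\" + user_input + \"'\")"
PARAM_SRC = "conn.execute(\"SELECT name FROM users WHERE name = ?\", (user_input,))"


def naive_scan(source_line: str) -> bool:
    """Return True when the naive rule would raise an alert for this line."""
    return bool(NAIVE_RULE.search(source_line))


def make_db() -> sqlite3.Connection:
    """Create an in-memory database with two users."""
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE users (name TEXT)")
    conn.executemany("INSERT INTO users VALUES (?)", [("alice",), ("bob",)])
    return conn


def lookup_concatenated(conn, user_input):
    # Vulnerable: user input becomes part of the SQL text.
    sql = "SELECT name FROM users WHERE name = '" + user_input + "'"
    return conn.execute(sql).fetchall()


def lookup_parameterized(conn, user_input):
    # Safe: user input is bound as a value and never parsed as SQL.
    return conn.execute(
        "SELECT name FROM users WHERE name = ?", (user_input,)
    ).fetchall()


conn = make_db()
payload = "' OR '1'='1"

print("alerts:", naive_scan(CONCAT_SRC), naive_scan(PARAM_SRC))
print("concatenated rows:", len(lookup_concatenated(conn, payload)))
print("parameterized rows:", len(lookup_parameterized(conn, payload)))
```

The rule reports both lines identically. Telling them apart requires knowing how the query is executed, which is the context a pattern match alone does not carry.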

Without contextual reasoning, scanners cannot rank findings by whether they are actually exploitable, so exploitable and non-exploitable weaknesses arrive with the same apparent urgency, which inevitably produces noise.

The Real Risk: Security Signal Loss

The most dangerous consequence of alert fatigue is not the additional work it creates for security teams.

The greater danger is security signal loss, a situation in which critical vulnerabilities become difficult to distinguish from the background noise generated by automated scanning tools.

When thousands of alerts compete for attention, the vulnerabilities that truly matter can become buried within the noise. Security teams may overlook a serious business logic flaw hidden among hundreds of low-risk findings, while developers may begin ignoring scanner results entirely because previous alerts proved to be false positives.

In this environment attackers gain an advantage.

Attackers do not search for patterns in source code.

They search for weaknesses in how systems behave.

Logical vulnerabilities such as broken authorization controls, object-level access flaws, and workflow manipulation often require reasoning about the interaction between identities, data objects, and application logic. These vulnerabilities rarely appear clearly in traditional scanner outputs and therefore become more difficult to identify when alert fatigue dominates the security workflow.

Moving Beyond Pattern-Based Security Detection

As AI accelerates the pace of software development, security strategies must evolve beyond traditional pattern-based detection models.

The next generation of application security testing focuses on behavioral validation, an approach that evaluates how applications behave when users interact with them rather than simply scanning code for potentially dangerous patterns.

Behavioral validation explores workflows, examines authorization relationships, and evaluates how identities interact with application objects during real interactions. By modeling system behavior, security tools can determine whether vulnerabilities are truly exploitable rather than merely possible.
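As a rough illustration of the idea, the sketch below exercises a toy handler as each user against each object and reports any read that crosses an ownership boundary. The `Invoice` model, the two handlers, and the `find_cross_user_access` helper are hypothetical and exist only for this example. This is not Aptori's implementation, and a real tool would drive a running application rather than call in-process functions.

```python
from dataclasses import dataclass


@dataclass
class Invoice:
    invoice_id: int
    owner: str
    amount: float


# Toy data store standing in for an application's objects.
INVOICES = {
    1: Invoice(1, "alice", 120.0),
    2: Invoice(2, "bob", 75.5),
}


def get_invoice_unchecked(user, invoice_id):
    """Handler that returns any invoice to any authenticated user."""
    return INVOICES.get(invoice_id)


def get_invoice_checked(user, invoice_id):
    """Handler that enforces ownership before returning the invoice."""
    invoice = INVOICES.get(invoice_id)
    if invoice is None or invoice.owner != user:
        return None
    return invoice


def find_cross_user_access(handler, users):
    """Call the handler as every user against every object.

    Returns (user, invoice_id) pairs where a user received an object
    owned by someone else.
    """
    violations = []
    for user in users:
        for invoice_id, invoice in INVOICES.items():
            result = handler(user, invoice_id)
            if result is not None and invoice.owner != user:
                violations.append((user, invoice_id))
    return violations


users = ["alice", "bob"]
print("unchecked:", find_cross_user_access(get_invoice_unchecked, users))
print("checked:", find_cross_user_access(get_invoice_checked, users))
```

The two handlers differ by a single ownership comparison, and neither contains a classically dangerous code pattern. The difference only shows up when identities and objects are exercised together.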

This shift dramatically improves the signal-to-noise ratio because it prioritizes vulnerabilities based on their real-world impact rather than theoretical risk.

Securing AI-Generated Code with Aptori

Platforms such as Aptori are designed to address the limitations of traditional security testing in modern development environments. Rather than relying solely on pattern detection, Aptori applies semantic analysis and runtime exploration techniques that model how applications behave during actual interactions.

By analyzing identities, objects, workflows, and authorization relationships, the platform can determine whether security controls are truly enforced as intended. This allows security teams to identify vulnerabilities that are genuinely exploitable while dramatically reducing the number of false positives generated by traditional scanners.

In environments where AI-generated code is accelerating development velocity, this type of contextual validation becomes essential.

For a concrete look at how behavioral validation works in practice, see how Semantic Runtime Validation detects BOLA and authorization flaws, the exact class of behavioral vulnerability AI-generated code most commonly introduces.

The future of application security is not about generating more alerts. It is about identifying the vulnerabilities that truly matter.
